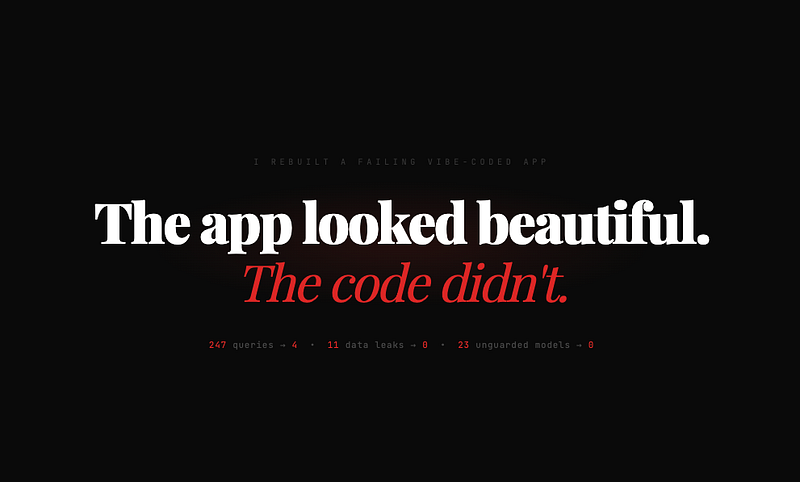

He shipped more code than anyone on the team. He also created more bugs, more tech debt and more production incidents than everyone else combined.

I need to be precise about what “fired” means here. I did not fire a person. I fired a tool. Then another tool. Then another. Over 18 months, my team went through four AI coding assistants before finding the one that actually made us better.

This is the story of that journey. From GitHub Copilot to JetBrains Junie to OpenAI Codex to Claude Code on the Max plan. Each one took on bigger tasks than the last. Only the last one made our code better.

Phase 1: GitHub Copilot (January 2024 — February 2025)

Copilot was our first hire. $10 per month per developer on the Pro plan. It lived inside VS Code and PhpStorm, autocompleting lines and suggesting blocks of code.

The honeymoon was immediate. Junior developers were producing code faster. Pull requests came in quicker. The team felt productive. We were shipping.

Then we started reviewing what was being shipped.

Copilot’s suggestions for Laravel were competent but generic. It would generate a controller that worked but ignored our project’s conventions. It used $guarded = [] on every model. It created migrations with float columns for monetary values. It never added database indexes. It suggested DB::raw() where Eloquent methods existed.

None of these were dealbreakers individually. Together, they created a pattern. The codebase was slowly filling with code that looked right but was structurally wrong.
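To see why float columns for money count as "structurally wrong", here is a minimal plain-PHP sketch of the drift. The `toCents` helper is hypothetical and written only for this illustration; it is not from our codebase or from Laravel.

```php
<?php

// Hypothetical helper: parse a decimal string such as "19.99" into
// integer cents without ever passing through a float.
function toCents(string $amount): int
{
    if (!preg_match('/^(\d+)(?:\.(\d{1,2}))?$/', $amount, $m)) {
        throw new InvalidArgumentException("Invalid amount: {$amount}");
    }
    $fraction = str_pad($m[2] ?? '', 2, '0'); // "5" -> "50", "" -> "00"
    return (int) $m[1] * 100 + (int) $fraction;
}

// Float arithmetic drifts: 0.10 + 0.20 is not exactly 0.30.
$floatTotal = 0.10 + 0.20;
var_dump($floatTotal === 0.30); // bool(false)

// Integer cents stay exact.
$centsTotal = toCents('0.10') + toCents('0.20');
var_dump($centsTotal === toCents('0.30')); // bool(true)

// Format for display only at the edge of the system.
printf("%d.%02d\n", intdiv($centsTotal, 100), $centsTotal % 100); // 0.30
```

The same reasoning applies to the database: an integer column holding cents compares and sums exactly, while a float column can be off by a fraction of a cent.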

The breaking point was a production incident in June 2024. A developer accepted a Copilot suggestion for a tenant-scoped query that forgot the tenant scope. Data from one client appeared in another client’s dashboard. It took us four hours to find because the code “looked” correct. It had been reviewed by another developer who also did not notice. Because it looked like something a competent developer would write.

That was the lesson. Copilot’s danger is not that it writes bad code. The danger is that it writes code that looks good enough to pass review.
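One general way to make this class of bug harder to write is to remove the unscoped path entirely, so a forgotten filter cannot pass review because it cannot exist. The sketch below is plain PHP with an in-memory array standing in for a table. `TenantInvoiceRepository` and the sample rows are hypothetical, and this is not how our tenant scoping is actually implemented.

```php
<?php

// Hypothetical in-memory stand-in for an invoices table.
$rows = [
    ['id' => 1, 'tenant_id' => 10, 'total_cents' => 5000],
    ['id' => 2, 'tenant_id' => 20, 'total_cents' => 7500],
    ['id' => 3, 'tenant_id' => 10, 'total_cents' => 1200],
];

// The repository is bound to one tenant at construction, so no caller can
// forget the filter: there is no method that returns unscoped rows.
final class TenantInvoiceRepository
{
    /** @param array<int, array<string, int>> $rows */
    public function __construct(
        private int $tenantId,
        private array $rows,
    ) {
    }

    /** @return array<int, array<string, int>> */
    public function all(): array
    {
        return array_values(array_filter(
            $this->rows,
            fn (array $row): bool => $row['tenant_id'] === $this->tenantId
        ));
    }
}

$repo = new TenantInvoiceRepository(10, $rows);
echo implode(',', array_column($repo->all(), 'id')), "\n"; // 1,3
```

In a Laravel application the equivalent idea is usually expressed as a global scope on the model, which is worth checking for explicitly during review of any generated query.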

What Copilot was good at: Autocompleting repetitive patterns. Generating boilerplate. Speeding up typing.

What Copilot got wrong: Everything that required context about our specific application. Business rules, naming conventions, security patterns and architectural decisions.

Why we moved on: Copilot is a line-level tool. It completes the next line. It does not understand the project, the domain or the existing patterns. For simple scripts, that is fine. For a multi-tenant SaaS application handling financial data, it was not enough.

Phase 2: JetBrains Junie (March 2025 — July 2025)

Junie launched in January 2025 as an early access program for IntelliJ IDEA and PyCharm. We waited. PhpStorm support arrived around June 2025. We jumped in immediately.

Junie promised something different from Copilot. Instead of autocompleting lines, it could handle entire tasks. Generate CRUD screens. Write tests. Run code inspections. It was a coding agent, not an autocomplete engine.

Junie’s advantage was that it was native to PhpStorm. It used the IDE’s code navigation, project structure and inspections to plan multi-step tasks. When you gave it an instruction, it would create a plan, execute it step by step, then show you the results.

Jeffrey Way from Laracasts tried it publicly and praised how well it showed its work. That transparency was real. You could watch Junie search files, read your existing code and decide where to make changes.

But Junie had problems specific to our workflow.

First, speed. Junie was methodical. It would plan, then search, then verify, then execute. A task that Claude Code would later finish in about 4 minutes took Junie 12–15 minutes. For small fixes, the overhead was not worth it. We found ourselves using the AI Assistant for quick completions and Junie for bigger tasks. Two tools for two scales of work.

Second, the credit system. JetBrains moved to a credit-based quota model in mid-2025. Each AI Credit equals $1 USD. The AI Pro plan costs about $10/month and gives you $10 in credits. AI Ultimate is about $30/month with $35 in credits. That sounds reasonable until you realize that agentic workflows consume tokens fast. Junie collects context, iterates through plans, makes multiple long requests. On one sprint, a developer used Junie for three days of intensive scaffolding and burned through the monthly credits before Friday. The JetBrains community forums were full of similar complaints.

Third, Laravel-specific awareness was limited at the time. Junie knew PHP, but it did not deeply understand Laravel’s conventions. It would use Eloquent methods from older versions. It did not understand our tenant scoping pattern. MCP support and Laravel Boost compatibility came later, which addressed much of this.

Junie was better than Copilot. It thought in tasks, not lines. But it was slow, expensive at heavy usage and not Laravel-aware enough for our needs at the time.

What Junie was good at: Multi-file changes. Test generation. Showing its reasoning process transparently.

What Junie got wrong: Speed on smaller tasks. Laravel-specific conventions (at launch). Credit consumption on complex workflows.

Why we moved on: By the time MCP support and Boost compatibility arrived, we had already found something faster.

Phase 3: OpenAI Codex (June 2025 — October 2025)

Codex was the opposite of Junie. Fast, aggressive and confident. OpenAI launched Codex in May 2025 as a cloud-based coding agent powered by codex-1, a version of o3 optimized for software engineering. The open-source Codex CLI, a terminal tool, had shipped about a month earlier. The models behind Codex were later upgraded to GPT-5-Codex and then GPT-5.2-Codex.

Codex was included with ChatGPT Plus at $20/month. The same price as Claude Pro, but bundled with all of ChatGPT’s other features. For the price, the raw output volume was impressive.

Codex CLI ran in the terminal. You gave it a task, it executed. No elaborate plans. No step-by-step transparency. Just results. Fast results.

For the first month, it felt like magic. A developer could describe a feature in two sentences and have a working implementation in minutes. Codex would edit multiple files, run commands and even execute tests. The speed was addictive.

Then the bugs started appearing.

Codex’s confidence was the problem. When it did not know the answer, it did not say so. It generated code that looked plausible but was wrong in subtle ways. A service class that used a deprecated API. A migration that assumed PostgreSQL syntax when we run MySQL. An event listener that was syntactically valid but logically circular.

The worst incident was a Codex-generated payment processing flow that reversed the order of operations. It charged the customer before validating the invoice total. In testing, the amounts were always correct so nobody noticed. In production, a race condition caused one customer to be charged twice in the same second. That was an expensive bug.
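The two failures in that incident, charging before validating and charging twice, can be illustrated with a small plain-PHP sketch. Everything here is hypothetical: `FakeGateway`, `payInvoice` and the invoice shape are invented for the example and do not reflect our real payment code or any real gateway's API.

```php
<?php

// Hypothetical in-memory gateway that remembers idempotency keys.
// A real gateway and its API would differ; this only shows the ordering.
final class FakeGateway
{
    /** @var array<string, int> charged amounts keyed by idempotency key */
    public array $charges = [];

    public function charge(string $idempotencyKey, int $amountCents): bool
    {
        if (isset($this->charges[$idempotencyKey])) {
            return false; // duplicate request: nothing is charged again
        }
        $this->charges[$idempotencyKey] = $amountCents;
        return true;
    }
}

/**
 * @param array{id: int, total_cents: int, lines: list<int>} $invoice
 */
function payInvoice(FakeGateway $gateway, array $invoice): bool
{
    // 1. Validate before any money moves.
    if ($invoice['total_cents'] <= 0
        || array_sum($invoice['lines']) !== $invoice['total_cents']) {
        throw new DomainException("Invoice {$invoice['id']} total does not match its lines");
    }

    // 2. Charge with a key derived from the invoice, so a retry for the
    //    same invoice cannot produce a second charge.
    return $gateway->charge("invoice-{$invoice['id']}", $invoice['total_cents']);
}

$gateway = new FakeGateway();
$invoice = ['id' => 42, 'total_cents' => 3000, 'lines' => [1000, 2000]];

var_dump(payInvoice($gateway, $invoice)); // bool(true)
var_dump(payInvoice($gateway, $invoice)); // bool(false), second call is a no-op
echo array_sum($gateway->charges), "\n"; // 3000
```

The in-memory check is not atomic, so under real concurrency the duplicate guard would need something enforced by the database or the payment provider, such as a unique constraint on the key.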

Codex was also not Laravel-aware in the way we needed. It knew Laravel existed. It could generate standard Eloquent queries. But it did not understand our application. Our naming conventions. Our tenant model. Our integer-cents financial pattern. Every Codex-generated feature needed manual reshaping to fit our codebase.

The speed was real. But speed without accuracy is just generating bugs faster.

What Codex was good at: Raw speed. Multi-file changes. Running tests automatically. Broad language support.

What Codex got wrong: Application-specific context. Financial logic. Admitting when it did not know something.

Why we moved on: Codex built fast but built wrong. The time saved on generation was spent on debugging.

Phase 4: Claude Code + Boost on Max (October 2025 — Present)

I almost did not try Claude Code. By October 2025, I was skeptical of all AI coding tools. Three failures in a row will do that.

What changed my mind was Laravel Boost. When Boost shipped as a public beta in August 2025, it was designed specifically to work with agents like Claude Code and Cursor. An MCP server that gives the agent deep access to your Laravel application. Schema, routes, models, configs, logs, version-specific documentation. Everything.

I installed Boost on one project. Connected Claude Code. Gave it a task I had given to every previous tool: “Add a payment reminder system with configurable intervals per tenant.”

The difference was immediate.

Claude Code with Boost read my database schema before generating anything. It saw that my financial columns use integer cents. It saw the BelongsToTenant trait. It saw that my Form Requests follow a specific naming pattern. The generated code fit my application like it was written by someone on my team.

But what sold me was not the generated code. It was how Claude Code handled uncertainty.

When the task was ambiguous, Claude Code asked questions. “Your invoices table has both a status column and a paid_cents column. Should the reminder logic check the status, the paid amount or both?” No other tool had done this. Copilot guessed. Junie assumed. Codex confidently picked one. Claude Code asked.

We subscribed to the Max 20x plan at $200/month. Not cheap. But the math was simple. If Claude Code saves each developer 4 hours per week, at our billing rates, the subscription pays for itself on day one.
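The break-even arithmetic is easy to check for your own team. The sketch below uses the $200/month price and the 4 hours per week from above; the $100/hour rate is purely an assumption for illustration, since I am not sharing our actual billing rates, and `breakEvenHours` is a hypothetical helper.

```php
<?php

// Returns how many saved hours cover the monthly subscription.
function breakEvenHours(int $subscriptionCents, int $hourlyRateCents): float
{
    if ($hourlyRateCents <= 0) {
        throw new InvalidArgumentException('Hourly rate must be positive');
    }
    return $subscriptionCents / $hourlyRateCents;
}

$subscriptionCents = 200_00; // Max 20x price: $200/month
$hourlyRateCents   = 100_00; // ASSUMPTION: $100/hour, not our real rate
$hoursSavedPerWeek = 4;      // per developer, as estimated above

$hours = breakEvenHours($subscriptionCents, $hourlyRateCents);
printf("Break-even after %.1f saved hours\n", $hours); // Break-even after 2.0 saved hours

// Value of one week of savings versus one month of subscription.
$weeklyValueCents = $hoursSavedPerWeek * $hourlyRateCents;
var_dump($weeklyValueCents >= $subscriptionCents); // bool(true)
```

Swap in your own rate and savings estimate. The lower the rate, the more saved hours the subscription has to earn back each month.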

What Claude Code gets right:

Application awareness through Boost. It reads before it writes. When it generates a migration, the column types match your existing schema. When it creates a model, it applies the same traits your other models use.

Extended context. Opus 4.6 has a 1 million token context window in beta with up to 128K output tokens. For a monolith with 200+ files, this matters. The agent can hold your entire application structure in context while making changes across multiple files.

Honesty about uncertainty. When Claude Code is not sure about a business rule, it asks. This single behavior prevented more bugs than any other feature.

The review workflow. Claude Code generates changes and presents them as diffs. You review, accept or modify. It feels like reviewing a pull request from a capable junior developer, not fighting with an autocomplete engine.

What Claude Code still gets wrong:

Performance optimization. It writes correct code but rarely optimal code. Chunking large queries, caching expensive calculations and indexing strategies still need human input.

Complex state machines. Multi-step workflows with conditional branching are better written by a human who understands the business process. Claude Code can implement the mechanical parts, but the logic design needs to come from you.

Frontend work. Our Livewire and Blade components are simple enough, but Claude Code’s CSS suggestions are generic. We still write all frontend styling by hand.

The Numbers: Pricing and Intelligence Compared

After using all four tools, here is the honest comparison as of February 2026.

What It Costs

| Tool | Plan | Price | What you get |
| --- | --- | --- | --- |
| GitHub Copilot | Free | $0 | 2,000 completions, 50 premium requests |
| GitHub Copilot | Pro | $10/month | Unlimited completions, 300 premium requests |
| GitHub Copilot | Pro+ | $39/month | 1,500 premium requests, all models including Claude and o3 |
| JetBrains Junie | AI Free | $0 | Limited credits, code completion via Mellum |
| JetBrains Junie | AI Pro | ~$10/month | $10 in AI credits (Junie + AI Assistant share credits) |
| JetBrains Junie | AI Ultimate | ~$30/month | $35 in AI credits, higher model access |
| OpenAI Codex | ChatGPT Plus | $20/month | 30–150 cloud messages per 5 hours, Codex CLI included |
| OpenAI Codex | ChatGPT Pro | $200/month | 300–1,500 messages per 5 hours, priority access |
| Claude Code | Pro | $20/month | Claude Code access, shared with Claude.ai usage |
| Claude Code | Max 5x | $100/month | 5x Pro limits, full Claude Code |
| Claude Code | Max 20x | $200/month | 20x Pro limits, priority access, Opus 4.6 |

A few things stand out. Copilot Pro at $10/month is the cheapest real option. Codex at $20/month through ChatGPT Plus is good value if you also use ChatGPT for other work. Claude Code Pro at $20/month gives you access but usage limits are shared with your Claude.ai chat, which can be tight. The serious tiers ($100–200/month) are where you get enough runway for daily agentic coding.

JetBrains’ credit model deserves special attention. The credits sound straightforward (1 credit = $1 USD), but agentic tasks burn through them fast. One heavy Junie session can consume $5–10 in credits. With the $35 in monthly credits on Ultimate, that is about 3–7 intensive sessions before you need to top up. The community frustration around this is real.
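The session estimate is simple integer division, sketched here in plain PHP with amounts in cents. `sessionsCovered` is a hypothetical helper, and the per-session costs are the rough figures from our own usage, not official JetBrains numbers.

```php
<?php

// Whole sessions a monthly credit allowance covers at a given cost per session.
function sessionsCovered(int $monthlyCreditsCents, int $costPerSessionCents): int
{
    if ($costPerSessionCents <= 0) {
        throw new InvalidArgumentException('Session cost must be positive');
    }
    return intdiv($monthlyCreditsCents, $costPerSessionCents);
}

$ultimateCredits = 35_00; // $35 in monthly credits on AI Ultimate

echo sessionsCovered($ultimateCredits, 10_00), "\n"; // 3 (at $10 per session)
echo sessionsCovered($ultimateCredits, 5_00), "\n";  // 7 (at $5 per session)
```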

How Smart They Are

This is harder to quantify because “intelligence” depends on what you are asking for. But here is what I observed across our Laravel projects.

Code completion accuracy (single-line suggestions):

Copilot and JetBrains AI Assistant are roughly equal here. Both handle autocomplete well for standard PHP and Laravel patterns. Codex and Claude Code do not play in this space. They are agents, not autocomplete tools.

Feature-level code generation (multi-file, multi-step tasks):

This is where the differences are stark. Junie's SWE-bench Verified score at launch was 53.6%. Respectable but behind the leaders. It has since improved with newer model updates and MCP support. Codex powered by GPT-5.2-Codex is strong on general coding tasks. Claude Opus 4.5 hit 80.9% on SWE-bench Verified, which was a record when it was measured. Opus 4.6 is the current model and likely improved further, though Anthropic has not published a direct SWE-bench number for it yet.

But benchmarks do not tell the full story for Laravel work. What matters is:

Does the agent understand Laravel conventions? With Boost: Claude Code and Junie (via MCP) both perform well. Without Boost: none of them are reliably Laravel-aware. Codex and Copilot generate generic PHP that needs reshaping.

Does it ask when it does not know? Only Claude Code consistently did this for us. The others guessed or assumed. This is not a benchmark score. It is a workflow behavior that prevents production bugs.

Does it handle financial logic correctly? None of them do by default. Every tool generated float columns for money until we told it not to. Our custom Boost guideline solved this for Claude Code. The others had no equivalent mechanism at the time we used them.

Context window for large codebases: Opus 4.6 offers 1M tokens (beta), which can hold a significant Laravel monolith in context. GPT-5.2-Codex has a large context window too, but Codex Cloud runs tasks in isolation so the effective context is per-task. Junie has access to PhpStorm’s project index, which is a different (and effective) approach to context.

My Summary

| Tool | Best for | Speed | Laravel awareness | Asks when unsure | Financial logic | Price | Credit anxiety |
| --- | --- | --- | --- | --- | --- | --- | --- |
| Copilot | Autocomplete | Instant | Low | No | Wrong defaults | $10–39/month | Low |
| Junie | IDE-native tasks | Slow but methodical | Medium (with MCP) | Rarely | Wrong defaults | $30+/month plus top-ups | High |
| Codex | Fast generation | Very fast | Low | No | Wrong defaults | $20–200/month | Medium |
| Claude Code + Boost | Laravel-aware development | Fast | High (with Boost) | Yes | Correct (with guidelines) | $100–200/month | Medium |

What the Journey Taught Me

The progression from Copilot to Claude Code was not just about better models. It was about a fundamental shift in what AI coding tools should do.

Copilot: Completes your typing. Saves keystrokes.

Junie: Plans and executes tasks. Saves scaffolding time.

Codex: Builds features fast. Saves implementation time.

Claude Code + Boost: Understands your application and builds features that fit. Saves rework time.

The first three tools made us faster. The last one made us better. There is a meaningful difference.

Faster means more code per hour. Better means less time fixing what was generated. Faster fills your codebase with code that needs reviewing. Better fills it with code that passes review.

The “fastest AI-assisted developer” was Codex. It produced more lines of code per hour than any tool we used. It was also responsible for two production incidents and dozens of bugs that slipped through review because the code was plausible.

We fired it. Best decision we ever made.

My Recommendation in 2026

If you are a Laravel developer choosing an AI coding tool right now, here is my honest ranking:

For serious Laravel work: Claude Code on Max ($100–200/month) with Laravel Boost installed. The combination of application-aware context and a model that asks questions instead of guessing is unmatched. The $100 Max 5x tier is sufficient for most developers. Go $200 Max 20x if you code 6+ hours daily.

For budget-conscious teams: Claude Code on Pro ($20/month) with Boost. You get the same application awareness with lower usage limits. Good enough for most solo developers and small teams. Just be aware that usage is shared with your Claude.ai chat.

For PhpStorm purists: Junie has improved significantly since we used it. MCP support means it can now use Laravel Boost for full application context. If you refuse to leave PhpStorm and can manage the credit budget, Junie is a solid option. Consider the AI Ultimate plan ($30/month) and budget for occasional top-ups.

For autocomplete only: Copilot Pro at $10/month is hard to beat for pure code completion. It does what it does well. Just review everything it suggests and do not trust it with business logic.

The tool matters less than the workflow. Install Laravel Boost. Write clear specs. Review every generated line. The AI writes the first draft. You make sure it actually works.