The acronym shows up in checklists, client audits and enterprise SOWs. Developers nod along. Then nobody can say what phase they are in.

The client audit arrived as a PDF of more than 40 pages. Somewhere in those pages was a section titled “Secure Software Development Lifecycle Compliance.” Under it, a checklist. Twelve items. Each one asked whether we had implemented a specific phase or control.

I read through it carefully. Then I read it again.

We were doing most of it. Not because someone had handed us a framework to follow. Because some of it is just how careful teams build software. But we were also missing parts, and the parts we were missing were not random. They were the parts that only show up when you treat security as a lifecycle concern rather than a testing phase.

That audit was the first time I had to sit with SSDLC as a formal concept rather than a vague acronym. What I found is that the framework is less alien than it looks, and the gap between what most teams do and what SSDLC actually requires is smaller than most compliance documents suggest.

But the gap is real. And in government and enterprise projects where SSDLC appears in nearly every SOW and procurement checklist, that gap has consequences.

What SSDLC Actually Is

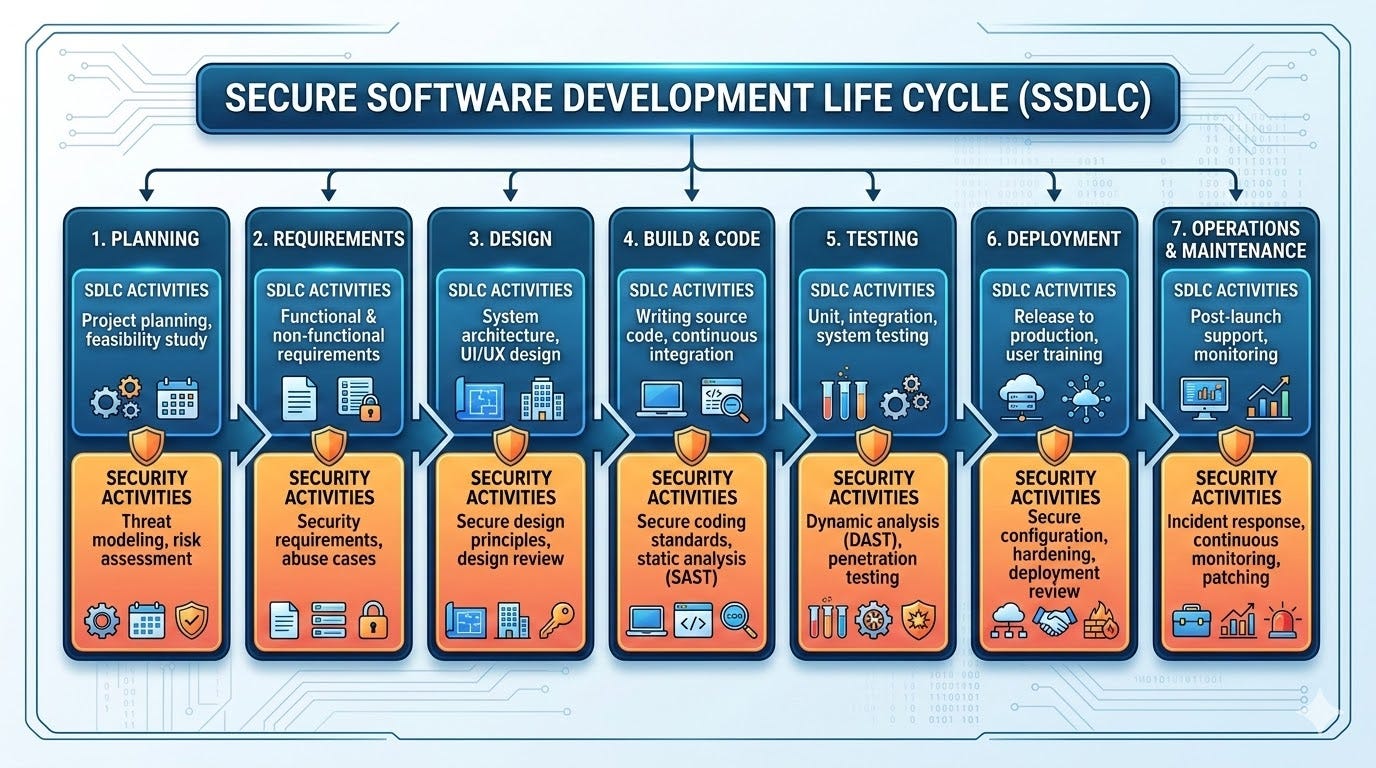

Secure Software Development Lifecycle is not a product you install or a process you bolt on to what you are already doing. It is a description of how security thinking gets embedded into every phase of software development instead of sitting at the end as a testing checkbox.

The traditional SDLC is: plan, design, build, test, deploy, maintain. Security in the traditional model lives almost entirely in the test phase. A penetration test gets scheduled close to launch. A code review happens before release. The security team sees the product for the first time when it is nearly finished. At that point, finding a serious architectural vulnerability means expensive rework or shipping with known risk. Both happen regularly.

SSDLC shifts that. Security requirements get defined at the start. Threat modeling happens during design, not after. Secure coding practices apply during implementation. Testing is continuous, not a gate before deployment. And after launch, there is a defined process for responding to vulnerabilities that are discovered in production.

The most widely cited framework for this is NIST SP 800-218, the Secure Software Development Framework (SSDF), published in February 2022. It organises practices into four groups: Prepare the Organization, Protect the Software, Produce Well-Secured Software and Respond to Vulnerabilities. OWASP maps similar concerns to development phases: Requirements, Design, Implementation and Verification.

Neither framework is prescriptive about tools or exact methods. Both describe outcomes. What does security look like at each stage? What evidence would demonstrate it? That flexibility is intentional and also why so many teams read the framework and walk away confused about what to actually do.

The Phase That Gets Skipped Most: Requirements

Most developers associate security with the implementation phase. Write secure code. Validate inputs. Hash passwords. Use parameterised queries. That is correct but incomplete.

Before a line of code is written, the security requirements for the system should exist. Not as a vague mandate to “be secure” but as specific, testable statements.

What data does this system handle? Is any of it personal data under a law such as the Personal Data Protection Act (PDPA)? Does it involve financial transactions? Does it need audit logging? Who are the different user roles and what can each one access? What happens if a user escalates their own privilege? What constitutes a failed authentication and how should the system respond?

These are requirements questions, not implementation questions. If your development team is discovering the answers to these during coding, you have already lost the most valuable phase of SSDLC. Retrofitting access control logic into a half-built system is expensive and error-prone. Designing it from the start takes a fraction of the time.

In practice, this means security requirements sit in the same document as functional requirements. They are written at the same time. They are reviewed by the same people. A login feature does not just have requirements about what happens when credentials are correct. It has requirements about lockout thresholds, session expiry, password policies and what gets logged on failure.

Most teams I have worked with do not write security requirements as requirements. They write them as comments in code, discover them after a client raises a concern, or infer them from whatever the framework provides as a default. That is not the same thing.

The Phase That Separates Good Teams: Design

Threat modeling is the core security activity of the design phase. It is also the activity that most teams either skip entirely or do informally without knowing that is what they are doing.

Threat modeling asks: given how this system is designed, what can go wrong? Who might attack it, what would they want and what paths exist to get it?

STRIDE is the most commonly used framework for this. It stands for Spoofing, Tampering, Repudiation, Information Disclosure, Denial of Service and Elevation of Privilege. It was developed by Microsoft and remains the most practical structured approach for developers who are not security specialists. For each component in your architecture, you ask which STRIDE categories apply and what mitigations address them.

You do not need a dedicated security team to do threat modeling. You need a developer who understands the system and is willing to think adversarially about it for an hour or two. The output is a list of identified threats and the design decisions made to address them. That list becomes part of the design documentation. It is also evidence for auditors.
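
The threat list does not need special tooling. As a minimal sketch, assuming a team keeps its threat model as plain data next to the design document, the structure could look like this. The `Threat` class, the `unmitigated` helper and every example entry are hypothetical and only illustrate the shape of the output.

```python
from dataclasses import dataclass

STRIDE = (
    "Spoofing",
    "Tampering",
    "Repudiation",
    "Information Disclosure",
    "Denial of Service",
    "Elevation of Privilege",
)


@dataclass
class Threat:
    component: str
    category: str          # must be one of the STRIDE categories
    description: str
    mitigation: str = ""   # empty means no design decision recorded yet

    def __post_init__(self):
        if self.category not in STRIDE:
            raise ValueError(f"unknown STRIDE category: {self.category}")


def unmitigated(threats):
    """Return the threats that still lack a recorded mitigation."""
    return [t for t in threats if not t.mitigation.strip()]


# Hypothetical entries for an imaginary system.
threat_model = [
    Threat("login API", "Spoofing",
           "Credential stuffing against the login endpoint",
           "Lockout after repeated failures"),
    Threat("login API", "Repudiation",
           "User denies having changed their password",
           "Audit log entry for every credential change"),
    Threat("report export", "Information Disclosure",
           "Export includes rows belonging to other tenants"),
]

for t in unmitigated(threat_model):
    print(f"OPEN: [{t.category}] {t.component}: {t.description}")
```

Anything printed as OPEN is a threat that was identified but has no design decision against it yet, which is exactly the kind of item an auditor will ask about.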

The design phase is also where you make architectural decisions that are very hard to reverse later. Does this service need direct database access or can it go through an API? Does this user role need write access to that table or only read? Does this endpoint need to exist as an unauthenticated route or can authentication be required?

Every one of those decisions is a security decision. Making them during design costs very little. Making them during a security audit after launch costs a great deal.

Implementation: The Phase Developers Actually Know

This is where most developers feel at home with security because it maps to things they already do. Input validation. Parameterised queries. Password hashing with bcrypt or Argon2. HTTPS enforcement. Avoiding hardcoded credentials.

What SSDLC adds to the implementation phase is discipline and tooling.

Static Application Security Testing (SAST) tools analyse source code for known vulnerability patterns without executing the code. In a Laravel codebase this might be Psalm with taint analysis or a tool like Enlightn. In Python it might be Bandit. These run in your CI pipeline and flag issues on every commit, not just before release.

Software Composition Analysis (SCA) scans your dependencies for known vulnerabilities. If you run composer install and pull in a package with a CVE, SCA tells you. Running composer audit gives you a built-in version of this as of Composer 2.4, no extra tooling required. GitHub Dependabot does the same for any repository where it is enabled, public or private.
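
To make the idea concrete, here is a minimal sketch of what an SCA check does underneath: compare installed versions against a list of advisories. It is not how composer audit or Dependabot are implemented. The package names, advisory identifiers and the simple "fixed in" rule are all hypothetical, and real tools handle version ranges and pre-release tags that this sketch ignores.

```python
from typing import NamedTuple


class Advisory(NamedTuple):
    package: str
    fixed_in: tuple    # first version containing the fix, e.g. (2, 4, 1)
    identifier: str    # placeholder ID, not a real CVE


def parse_version(text):
    """Turn '2.4.0' into (2, 4, 0) so versions compare numerically."""
    return tuple(int(part) for part in text.split("."))


def vulnerable_packages(installed, advisories):
    """Return (package, installed_version, advisory_id) for every match."""
    findings = []
    for adv in advisories:
        version = installed.get(adv.package)
        if version is not None and parse_version(version) < adv.fixed_in:
            findings.append((adv.package, version, adv.identifier))
    return findings


# Hypothetical lockfile contents and advisory feed.
installed = {"acme/http-client": "2.3.9", "acme/logger": "1.8.0"}
advisories = [
    Advisory("acme/http-client", (2, 4, 1), "EXAMPLE-0001"),
    Advisory("acme/logger", (1, 2, 0), "EXAMPLE-0002"),
]

findings = vulnerable_packages(installed, advisories)
for package, version, advisory_id in findings:
    print(f"{package} {version} is affected by {advisory_id}")
```

In a pipeline, a non-empty findings list would fail the build, or at least force someone to record why the risk was accepted.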

Secure coding standards exist at the implementation phase too. OWASP publishes a Secure Coding Practices Quick Reference Guide that covers input validation, output encoding, authentication, session management, access control, cryptography, error handling and logging. It is not long. Most experienced developers will recognise most of it. The ones they do not recognise are usually the most important.

Code review at this phase is not just about correctness and style. It is about security assumptions. Does this PR introduce a new endpoint without authentication? Does this query construct SQL strings rather than using parameter binding? Is this error message leaking stack traces to the client? These are implementation phase security questions and they belong in code review, not in a pen test six months later.
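
The SQL question is the easiest of these to show. The sketch below uses Python's built-in sqlite3 module and a hypothetical users table to contrast a query built by string concatenation with one that uses parameter binding. The table, the function names and the payload are illustrative only.

```python
import sqlite3


def find_user_unsafe(conn, email):
    # Vulnerable: the input becomes part of the SQL text itself.
    query = "SELECT id, email FROM users WHERE email = '" + email + "'"
    return conn.execute(query).fetchall()


def find_user_safe(conn, email):
    # Parameter binding: the value is passed separately from the SQL text.
    query = "SELECT id, email FROM users WHERE email = ?"
    return conn.execute(query, (email,)).fetchall()


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, email TEXT)")
conn.executemany(
    "INSERT INTO users (email) VALUES (?)",
    [("a@example.com",), ("b@example.com",)],
)

# A classic injection payload that turns the WHERE clause into a tautology.
payload = "nobody@example.com' OR '1'='1"

print(len(find_user_unsafe(conn, payload)))  # 2: every row leaks
print(len(find_user_safe(conn, payload)))    # 0: payload is treated as data
```

A reviewer who sees the first pattern in a PR does not need to prove it is exploitable. The concatenation is the finding.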

Verification: The Phase That Proves It Works

Security testing in most projects means a penetration test booked close to launch. That is better than nothing. It is also the most expensive and least useful time to find serious issues.

SSDLC verification is continuous. It starts during development and continues through every release.

Dynamic Application Security Testing (DAST) tests a running application from the outside, the way an attacker would. ZAP (formerly OWASP ZAP) is one of the most widely used open-source DAST tools. It crawls your application, sends malformed inputs and looks for vulnerability patterns in responses. It can run against a staging environment as part of a CI/CD pipeline.

Penetration testing still has a role but it is a deeper, manual investigation for specific threat scenarios, not the first time security is assessed. A penetration test on a system that has had continuous security testing throughout development finds more interesting issues and fewer basic ones.

Security review of infrastructure configuration also lives here. Are your S3 buckets private by default? Are your security groups following least privilege? Is your database accessible from the internet? These are verifiable before production and should be.
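
Checks like these can be automated long before a pen tester looks at the environment. As a rough sketch, assuming firewall rules have been exported into a simple list of dictionaries, the "is the database reachable from the internet" question becomes a few lines of code. The rule shape here is a hypothetical simplification, not any cloud provider's API format, and the port list is a small illustrative sample.

```python
# Default ports for MySQL, PostgreSQL, SQL Server and MongoDB.
DATABASE_PORTS = {3306, 5432, 1433, 27017}
ANYWHERE = {"0.0.0.0/0", "::/0"}


def exposed_database_rules(rules):
    """Return inbound rules that open a database port to the whole internet."""
    findings = []
    for rule in rules:
        covers_db_port = any(
            rule["from_port"] <= port <= rule["to_port"]
            for port in DATABASE_PORTS
        )
        if rule["cidr"] in ANYWHERE and covers_db_port:
            findings.append(rule)
    return findings


# Hypothetical inbound rules for an imaginary environment.
rules = [
    {"name": "web-https", "from_port": 443, "to_port": 443, "cidr": "0.0.0.0/0"},
    {"name": "db-internal", "from_port": 5432, "to_port": 5432, "cidr": "10.0.0.0/16"},
    {"name": "db-debug", "from_port": 3306, "to_port": 3306, "cidr": "0.0.0.0/0"},
]

for rule in exposed_database_rules(rules):
    print(f"EXPOSED: {rule['name']} allows {rule['cidr']} on port {rule['from_port']}")
```

Run against every environment on every deploy, a check like this catches the temporary debugging rule that nobody remembered to remove.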

Operation and Response: The Phase Nobody Plans For

Most SSDLC discussions stop at deployment. SSDLC does not.

After a system is live, vulnerabilities will be discovered. Sometimes by your team, sometimes by researchers, sometimes by attackers. How your organisation handles that discovery is a defined part of the lifecycle.

A vulnerability response process at minimum includes: a way for vulnerabilities to be reported (internally and externally), a triage process that determines severity and urgency, a remediation workflow that gets fixes into production and a communication plan for affected users or stakeholders when required.
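
The triage step is the one most worth writing down as rules. The sketch below maps a CVSS v3 base score to its standard qualitative rating and then to a fix deadline. The rating bands follow the CVSS v3 specification. The remediation windows are hypothetical values chosen for illustration, and each team should set its own.

```python
from datetime import datetime, timedelta


def cvss_rating(score):
    """Map a CVSS v3 base score to its qualitative severity rating."""
    if not 0.0 <= score <= 10.0:
        raise ValueError("CVSS scores range from 0.0 to 10.0")
    if score == 0.0:
        return "none"
    if score < 4.0:
        return "low"
    if score < 7.0:
        return "medium"
    if score < 9.0:
        return "high"
    return "critical"


# Hypothetical remediation windows, not taken from any standard.
REMEDIATION_WINDOW = {
    "critical": timedelta(days=1),
    "high": timedelta(days=7),
    "medium": timedelta(days=30),
    "low": timedelta(days=90),
}


def fix_due(score, reported_at):
    """Return (rating, deadline); deadline is None when nothing needs fixing."""
    rating = cvss_rating(score)
    window = REMEDIATION_WINDOW.get(rating)
    return rating, (reported_at + window if window else None)


rating, deadline = fix_due(7.5, datetime(2024, 1, 1))
print(rating, deadline.date())  # high 2024-01-08
```

The exact numbers matter less than the fact that they exist before the first report arrives.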

Most development teams have none of this defined. There is no security.txt file at /.well-known/security.txt on the server. There is no email address for security reports. There is no documented process for what happens when a developer discovers a critical bug in production at 11pm. These are not hard to define. They are just not written down because nobody asked.
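
A security.txt file is the cheapest of these to fix. RFC 9116 defines the format and places the file at /.well-known/security.txt, with Contact and Expires as the required fields. The sketch below renders a minimal file. The `render_security_txt` helper, the contact address and the policy URL are placeholders, and the 180-day validity is an assumption chosen to stay under the one year the RFC recommends.

```python
from datetime import datetime, timedelta, timezone


def render_security_txt(contact, policy_url, now, valid_days=180):
    """Render a minimal security.txt body with the two required fields."""
    expires = (now.astimezone(timezone.utc) + timedelta(days=valid_days))
    lines = [
        f"Contact: {contact}",
        f"Expires: {expires.strftime('%Y-%m-%dT%H:%M:%SZ')}",
        f"Policy: {policy_url}",   # optional field
    ]
    return "\n".join(lines) + "\n"


# Placeholder values for an imaginary organisation.
body = render_security_txt(
    contact="mailto:security@example.com",
    policy_url="https://example.com/security-policy",
    now=datetime(2025, 1, 1, tzinfo=timezone.utc),
)
print(body)
```

Because the Expires field goes stale, generating the file during deployment is one way to keep it current without anyone having to remember.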

Logging and monitoring also live in this phase. You cannot respond to what you cannot detect. Application logs that capture authentication failures, privilege escalation attempts and unusual query patterns are not just debugging tools. They are the early warning system for active attacks.
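
Detection is much easier when security events are logged in a consistent, machine-readable shape. Here is a minimal sketch using Python's standard logging module. The logger name, the event names and the field names are assumptions for illustration. Note what is deliberately absent: the submitted password never goes into the log.

```python
import json
import logging

security_log = logging.getLogger("security")
security_log.setLevel(logging.INFO)

# Send security events to stderr with a timestamp; a real setup would
# more likely ship them to a central log store.
_handler = logging.StreamHandler()
_handler.setFormatter(logging.Formatter("%(asctime)s %(name)s %(message)s"))
security_log.addHandler(_handler)


def log_security_event(event, **fields):
    """Emit one security event as a single JSON object per line."""
    payload = {"event": event, **fields}
    security_log.warning(json.dumps(payload, sort_keys=True))


# Hypothetical events an application might record.
log_security_event("auth_failure", user_id="u-42", source_ip="203.0.113.7")
log_security_event("privilege_denied", user_id="u-42", resource="/admin/users")
```

One JSON object per line means an alerting rule can count auth_failure events per source address without parsing free-form text.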

The Contrarian Point

Here is what most SSDLC compliance documentation will not say directly.

SSDLC is not a process you adopt from outside. It is a description of what disciplined teams already do in fragments. The teams that feel SSDLC is completely foreign to their work are the teams that have been treating security as someone else’s problem. The teams that find most of it familiar are the ones who have been building carefully all along, just without a name for it.

The framework does not teach you to write secure code. It provides a structure for making security practices consistent, documented and verifiable across the entire lifecycle. That is what auditors need. That is what clients are actually asking for when they write SSDLC into a tender requirement. Not magic. Evidence.

The gap between what most development teams do and what a mature SSDLC looks like is usually in three places: security requirements are not written down as requirements, threat modeling does not happen formally and there is no defined response process for post-launch vulnerabilities. Everything else is often closer than teams realise.

If you are a developer sitting in a meeting where someone is asking about SSDLC compliance, the right first question is not “which framework do we use.” It is: “where in our process do we currently write down security requirements, and where is our process for responding to vulnerabilities discovered after launch?”

The answer to those two questions tells you most of what you need to know about the actual gap.

The Practical Starting Point

If your team wants to move toward SSDLC without buying a tool, hiring a consultant or disrupting your current delivery process, start here.

In the next sprint, add security acceptance criteria to at least one user story. Not “system shall be secure.” Specific and testable: “unauthenticated requests to this endpoint return 401,” “more than five failed login attempts within ten minutes trigger a lockout,” “all database queries use parameterised statements.”
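
A criterion is testable when it can be written as a test. As a sketch, here is the lockout criterion expressed that way, with a small in-memory throttle standing in for the real login code. The `LoginThrottle` class and its method names are hypothetical, and timestamps are plain seconds to keep the example deterministic.

```python
from collections import defaultdict, deque

MAX_FAILURES = 5            # more than this many failures triggers a lockout
WINDOW_SECONDS = 10 * 60    # ten-minute sliding window


class LoginThrottle:
    """Tracks recent failed logins per account (illustrative only)."""

    def __init__(self):
        self._failures = defaultdict(deque)

    def record_failure(self, account, now):
        self._failures[account].append(now)

    def is_locked(self, account, now):
        attempts = self._failures[account]
        # Drop failures that have aged out of the window.
        while attempts and now - attempts[0] >= WINDOW_SECONDS:
            attempts.popleft()
        return len(attempts) > MAX_FAILURES


def test_five_failures_do_not_lock():
    throttle = LoginThrottle()
    for second in range(5):
        throttle.record_failure("alice", now=second)
    assert not throttle.is_locked("alice", now=5)


def test_sixth_failure_within_window_locks():
    throttle = LoginThrottle()
    for second in range(6):
        throttle.record_failure("alice", now=second)
    assert throttle.is_locked("alice", now=6)


def test_old_failures_expire():
    throttle = LoginThrottle()
    for second in range(6):
        throttle.record_failure("alice", now=second)
    assert not throttle.is_locked("alice", now=WINDOW_SECONDS + 10)


test_five_failures_do_not_lock()
test_sixth_failure_within_window_locks()
test_old_failures_expire()
print("lockout criteria hold")
```

Writing the test first also forces the ambiguous parts of the requirement into the open, such as whether the fifth or the sixth failure is the one that locks the account.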

Run composer audit or the equivalent dependency audit for your stack on every build. Fix the critical findings. Document why you accepted any you chose not to fix.

Add one STRIDE walkthrough to your next architectural decision meeting. You do not need a facilitator. You need someone to ask: for each component we are adding, which of these six threat categories applies and what is our mitigation?

Define who owns security incidents on your team. Not in a meeting. In a document. Who gets called when a critical vulnerability is found in production at 11pm on a Friday? Who has the production access to roll back a deployment, and what is the rollback plan? Who communicates with the client? Write those names and steps down. Most teams assume everyone knows. Nobody does until it happens.

None of that requires SSDLC certification. None of it requires a security team. All of it moves you closer to what that forty-page audit is actually looking for.

The acronym is intimidating. The underlying idea is not. Build security in from the start, check your assumptions as you go, test continuously and have a plan for when things go wrong anyway.

That is it. Everything else is documentation.